
Users on the platform who wished to send supportive comments to other users had the option of sending AI-generated comments rather than formulating their own messages. The program used the same GPT-3 large language model that powers ChatGPT to generate therapeutic comments for users experiencing psychological distress. This suddenly widespread use of large language model chatbots has brought new urgency to questions of artificial intelligence ethics in education, law, cybersecurity, journalism, politics - and, of course, healthcare.Īs a case study on ethics, let's examine the results of a pilot program from the free peer-to-peer therapy platform Koko. Its responses are comparable to those of a well-read and overly confident medical student with poor recognition of important clinical details. In my experience, I've asked ChatGPT to evaluate hypothetical clinical cases and found that it can generate reasonable but inexpert differential diagnoses, diagnostic workups, and treatment plans. Additionally, data entered into ChatGPT is explicitly stored by OpenAI and used in training, threatening user privacy.

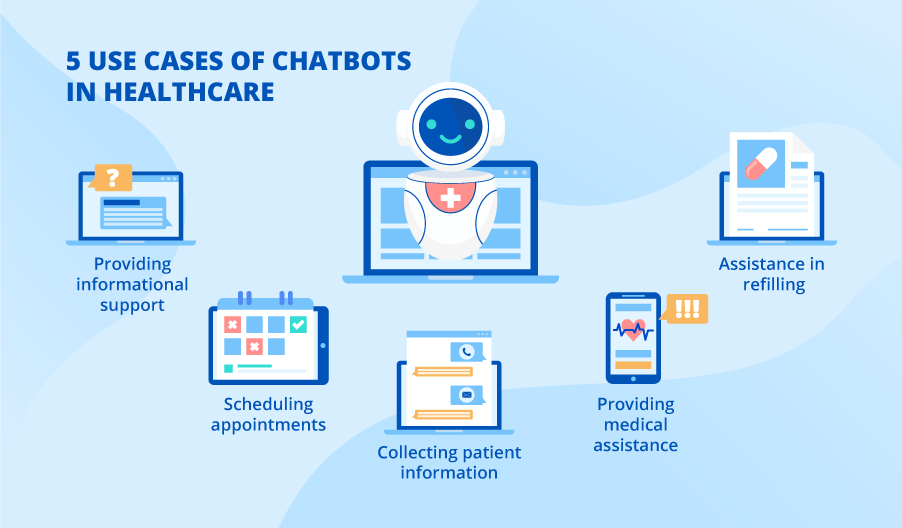

Despite significant improvements over earlier models, it has at times shown evidence of algorithmic racial, gender, and religious bias. As AI technology continues to evolve and become more sophisticated, it has the potential to revolutionize the medical industry and improve patient care.At the same time, like other large language model chatbots, ChatGPT regularly makes misleading or flagrantly false statements with great confidence (sometimes referred to as "AI hallucinations"). However, the study's findings open up a new way for AI to be used in the medical field to assist physicians in their work. It's always important to consult with a licensed medical professional for any health concerns. Just because Chat GPT gave good answers does not mean it should be relied on for medical advice. "The model that we see here is not that we replace doctors with this technology, but that AI can be used to help doctors do a better job," he said. Hollingsworth emphasized that AI chatbots should not replace doctors, but rather help them. AI can also help analyze patient data to detect patterns and provide personalized treatment plans.Īs with any technology though, there are concerns about AI's accuracy and reliability. AI chatbots and virtual assistants can help doctors with routine tasks such as scheduling appointments, ordering tests, and checking patients' medical history. "We wanted to test out how useful it would be within a medical setting," he said.Ĭhat GPT is not the only AI technology being used in the medical industry.

Dredze said that the success of Chat GPT in the study was an indication that people are using the AI chatbot for health and medical questions. The study showed that not only did Chat GPT perform well, but it was rated more favorably than the answers from doctors. He noted that the AI chatbot was not better than doctors but had the time to come up with answers, unlike human physicians who have to put in extra work to write out answers in detail. Mark Dredze, an associate professor at Johns Hopkins University and one of the minds behind the study, said that the most striking part was the detail Chat GPT was able to put into its answers. With this trend in mind, Johns Hopkins University conducted a recent study comparing medical answers from actual physicians to answers generated by the AI chatbot, Chat GPT.ĭr. People are increasingly comfortable seeking information and advice online. Why are patients turning to artificial intelligence chatbots for medical advice? 03:03īALTIMORE - The future of medicine may have arrived sooner than expected, with patients turning to artificial intelligence (AI) chatbots for medical advice.
